Brian K. Little

A 3rd grade teacher at Seventy-Fifth Street Elementary in 2008

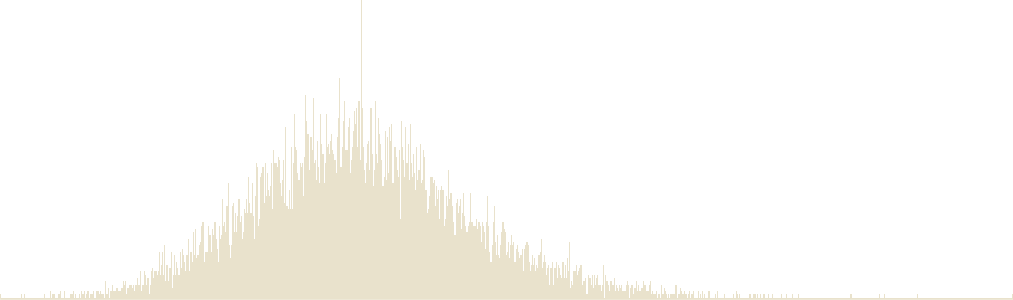

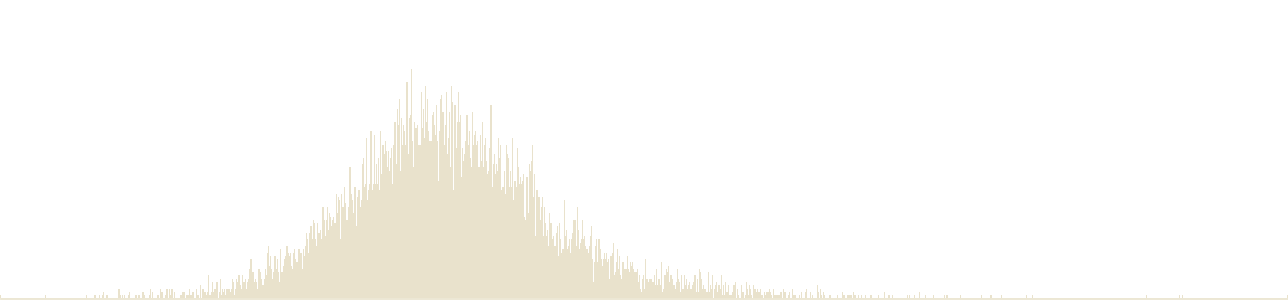

These graphs show a teacher's "value-added" rating based on his or her students' progress on the California Standards Tests in math and English. The Times’ analysis used all valid student scores available for this teacher from the 2003-04 through 2009-10 academic years. The value-added scores reflect a teacher's effectiveness at raising standardized test scores and, as such, capture only one aspect of a teacher's work.

Math effectiveness

English effectiveness

About this rating

The red lines show The Times’ value-added estimates for this teacher. Little falls within the “least effective” category of district teachers in math and within the “least effective” category in English. These ratings were calculated based on test scores from 86 students.

Because this is a statistical measure, each score has a degree of uncertainty. The shading represents the range of values within which Little’s actual effectiveness score is most likely to fall. The score is most likely to be in the center of the shaded area, near the red line, and less likely in the lightly shaded area. Teachers with ratings based on a small number of student test scores will have a wider shaded range.

The beige area shows how the district's 11,500 elementary school teachers are distributed across the categories.

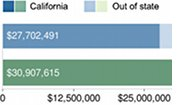

Little's LAUSD teaching history

Years used for value-added rating. See FAQ for details.

- Seventy-Fifth Street Elementary, 2008 - 2004

Brian Little's Response:

To all concerned:

This correspondence is in reference to some results of an 'effectiveness' outcome for teacher scores the L.A. Times intends to publish (again). I received this 'effectiveness' score Friday April 8, 2011 and I have been asked to respond voluntarily.

First of all thank you L.A. Times very much for demonstrating an ability to display raw data with its limitations. Unfortunately, this doesn’t reflect with any accuracy, the effectiveness of me or my teaching. It does show a five year period of administrative turbulence and bias at the site in question. However the scores exhibited here are dismal at best. Again they aren’t accurate. I’d also like to know if our precious tax dollars are funding this research. If so, what is the cost if for the most part the results lack some validity?

I do not intend to make excuses. This L.A. Times study and publication is showing raw data. It is what it is. What is shown is not a teacher effectiveness gauge, but a combination of mismanaged class assignments.

The nature of that dilemma is neither here nor there. The graphical representation though represents the effects of the dilemma.

Any reader must know that any student (high achiever or low achiever) can be transferred into a teacher’s class within months or weeks prior to a state exam. Does the L.A. Times’ researcher Richard Buddin consider that? Should we include students with this transience or their prior knowledge or limited to no prior knowledge?

Should we be teaching students and parents to be self sufficient and responsible for their outcomes? Should they be responsible for their scores as well? Based on these scores this L.A. Times publication exhibits, should I go to each of my past teachers and say that my experience has not led to value added outcomes for my students? Should I label them ineffective? Where does this ridiculous nature end?

If the intent of this display with my name attached is to represent truth and accuracy in teacher effectiveness, I think the prudent thing to do would be to include the LAUSD OCR (Language Arts) Data and Math scores for the past 3 years. In addition, parent surveys may need to be included in this mix as well. If those are included, the graph would be at ‘most effective’ because there is for the most part, an increase in student scores from student entry into my class to student exit. Parent satisfaction would also be a factor for rating effectiveness. Therefore it would show effectiveness and bring the scores up.

The state tests do not incorporate the strategies teachers, like myself, must use. We use learning maps and visual representations, group discussions, etc. to help with comprehension that may not be so available when the tests are given. Math is done with manipulatives but the state test allows sharpened pencils and rulers. This is a rough outline but the point is some items just don’t align with the state test format. Each school may be different but we’re expected not to teach to the test.

We have also used strategies to pinpoint and isolate areas to raise scores by a few points in one area or another. It is a great strategy and could show an increase in a component here or there and perhaps even manipulate data. Is this a value added method? Are we to teach to the test?

Does that really show teacher effectiveness?

Now my question is whether this publication (LATimes and the Richard Buddin findings) has intentions of publishing LAUSD OCR (Language Arts) Data and Math scores for the past 3 years on my behalf (or any teacher who may have switched grades)? If this publication wants to display my name to the public, it should have the decency to be accurate with all assessments that are used. If the focus is simply the state tests, then when this publication publishes its results (as was done last year) it represents inaccuracy and it is misleading. The results are just perpetually the same since I’ve taught a different grade for three years thereafter. There is nothing cumulative about it. It shows a period frozen in time that happened to be a turbulent time at that. Now year after year the same results are shown to the public.

It can work both ways. Think of a teacher who just taught one year of 5th grade with solid outcomes. After that taught elsewhere 'ineffectively' but perpetually looks strong with this type of publication.

Am I to be inaccurately defined by this data indefinitely or perpetually for having to be subjected to a biased administration for the results generated? I hope the parents, friends, students, colleagues, peers, administrators, or anyone with a general interest just understands what the numbers mean. I doubt anyone would consider these numbers to define my effectiveness.

Please keep in mind that teachers aren’t measured effective unless they are teaching grades 3 or 5 in this L.A. Times publication (to the best of my knowledge based on what was presented to me in the email hyperlink I was led to prior to publication). State tests are key in this publication. Why not show every teacher in the district or the state?

Should this publication marginalize my good name (or any teacher for that matter) because I (or any teacher for that matter) was given some questionable students during this timeframe? Can you prove in that timeframe under the same circumstances someone would have been more effective? If so, what are these factors? I’d also love to see the time machine.

Do you or your research team intend to publish effective and ineffective parents? Do you or your research team intend to publish effective and ineffective UTLA representatives? Do you or your research team intend to publish effective and ineffective LAUSD administrators? Is this just finger pointing?

I am all for merit pay but make everyone in the child’s life accountable. Label everyone effective or ineffective or value added or subtracted. Not all teachers get the high achievers. Not all classes will have the same outcomes. Should we go merit pay and attach the pay to the score, I will take the high achievers and the gifted. Who wouldn’t?

I know I am effective and so does the community I represent and serve.

Thank you to any reader for reading this response.

Thank you LA Times for publishing these numbers.

For further reading, consider, ‘Research Study Shows L. A. Times Teacher Ratings Are Neither Reliable Nor Valid’ which shows the simplicity of value added concepts of these attempts from Richard Buddin’s findings (Briggs, 2011). It lacks too many factors.

That may show some balance to this continuous saga.

Sincerely,

Brian Keith Little

References:

Briggs, D., & Mathis, W. (2011). Research study shows L.A. Times teacher ratings are neither reliable nor valid. Retrieved from http://nepc.colorado.edu/newsletter/2011/02/research-study-shows-l-times-teacher-ratings-are-neither-reliable-nor-valid

If possible, I may add more prior to publication as time permits.

The Times gave LAUSD elementary school teachers rated in this database the opportunity to preview their value-added evaluations and publicly respond. Some issues raised by teachers may be addressed in the FAQ. Teachers who have not commented may do so by contacting The Times.
